Author: Maria Makurat (Human Rights Team), with a contribution from Julia Hodgins (Culture, Society & Security Team)

Introduction

Physical violence against women is addressed by many institutions and organizations, but what about the cyber domain? Cyber violence is not a new concept, but the coronavirus pandemic has brought new challenges and even a surge in the problem. UN Women discussed this in a report stating that the Covid pandemic had an impact on online harassment, which drew attention to the lasting detrimental effects that online harassment can have on women. This article explores the developing issue of violence against women in the cyber domain by first considering various definitions and then examining case studies drawn from reports and the literature, in order to identify questions that remain open.

Defining violence against women in the cyber domain

First, one needs to define what violence against women in the cyber domain entails. In recent years, scholars, institutions and organizations have proposed several definitions. The task is challenging because it is unclear what exactly “aggression” and “violence”, for example “hate speech”, mean in the cyber domain. The discourse on defining online gender-based violence shows that a strong debate exists; however, as technology evolves, wider definitions are needed to include all forms of online violence.

When considering violence against women in the cyber domain, one inevitably asks what counts as “violent” in this case. International relations theory has long dealt with the concept of violence. Finlay, for instance, points out that one should not only consider “violence” by itself but extend it to “violent agency”, which has the following components: “defined first by a double intention (1) to inflict harm using a technique chosen (2) to eliminate or evade the target’s means of escaping it or defending against it. Second, the harms it aims at are destructive (as opposed to appropriative).”

Defining “aggression” and “violence” in relation to the cyber domain has its own challenges. "Defining “aggression” is a complex, in and of itself controversial endeavour, as it relates to a tense exchange between at least two actors. Complexity grows as, increasingly often, aggressions become invisible - or blurry at the very least. Complications keep growing when the subject is situated in the scope of gender relations. Still now worldwide, at varying degrees, physical violence against women remains officialised, e.g., state violence exerted by the Iranian Moral Police to ‘rein in’ female transgressors is legal and inconsequential. Complications exponentially increase when translating gender relations into cyberspace, due to both inherent challenges of cyberspace (obscureness, non-territoriality/territoriality, low threshold for entry and exit, easy concealment) and the assumption of cyber being at least gender-neutral, if not male-dominated by default. Nevertheless, constructivism suggests that security is not neutral as social factors (ethnicity, gender, age, nationality, class, etc.) allocate power, and power between actors underpins exchanges, particularly aggressions. To define aggression, exchanges are often de-constructed, and contrasted to a threshold set under the influence of power stances, perceived vulnerabilities, and mindsets about the actors in question." (contribution by Julia Hodgins).

There seems to be growing concern about online violence against female journalists and a need for guidelines on how to monitor and evaluate it. This is illustrated by the guidelines and report published by the OSCE in 2023, which define what “gender-based online violence” against female journalists means: “sexist and misogynistic involving frequently threats of physical and/or sexual violence; sexualized abuse and harassment; digital privacy and security breaches that can expose identifying information and exacerbate offline safety threats facing the target; and networked or mob harassment.” (…) “often bound with gendered disinformation.” Furthermore, the OSCE identifies eight features of gender-based online violence: it is misogynistic, it is frequently networked, it radiates, it is intimate, it can be extreme, it behaves like ‘networked gaslighting’, it is extreme in intersectional discrimination, and it contains disinformation.

Among scholarly definitions, Lewis, Rowe and Wiper examined the issue from a criminological point of view, stating that there are gaps in the literature and a “failure to develop a robust gendered analysis, a lack of comparative analysis of online and offline VAWG and a lack of victimological examination of online abuse experienced by women and girls.” A press release by the Council of Europe in November 2021 again stated that clearer definitions of online gender-based violence are needed so that more precise laws can be put in place. The recommendation proposes defining the issue as “the digital dimension of violence against women.”

The latest definition by UN Women reads as follows: “Technology-facilitated gender-based violence (TF GBV) is any act that is committed, assisted, aggravated or amplified by the use of information communication technologies or other digital tools which results in or is likely to result in physical, sexual, psychological, social, political or economic harm or other infringements of rights and freedoms.” Notably, this definition widens the scope to include any act related to online violence.

As one can see, definitions are still being worked out, and this is an essential process for putting stronger laws in place. States need international definitions in order to take joint measures against online violence. In the following, case studies of online violence are highlighted to discuss this still-pressing issue.

Case studies of online violence and future concerns

Violence against women in the cyber domain emerged early and continues to be a growing threat today. Gurumurthy and Menon highlighted the issue in 2009. They point to cases, in India for example, in which women were filmed while being raped and the footage was then posted on social media platforms in order to maintain the cycle of violence. They also discuss women in Kerala who committed suicide as a result of online harassment, which caused a stir in public discussion.

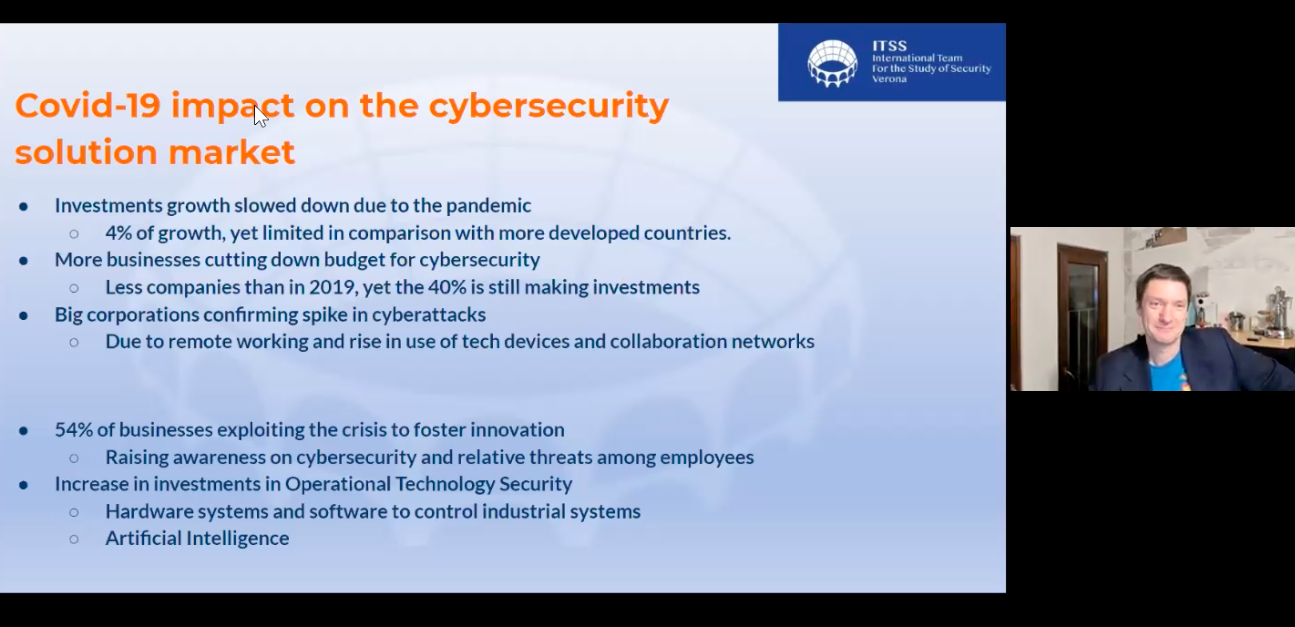

UN Women released a report in 2015 stating that urgent action is needed to combat violence against women in the cyber domain. The report criticizes the failure to implement sustainable goals and to achieve reductions in online violence against women, and proposes better sanctions, sensitization through training and campaigns that change social attitudes, and a more responsible internet infrastructure. Despite such reports, the coronavirus pandemic has had a significant impact on online violence against women. Reports have shown that women experience an increasing amount of online violence: “Cyber harassment and cyberbullying have increased by 50% during quarantine in Australia. Simultaneously, the United Kingdom data shows that the number of complaints about visual sexual harassment doubled in March 2020.”

Online violence against women is highly complex and many factors play a role, which means that tackling it requires sustainable goals that address several of these factors. There is growing concern about violence against women working in politics or other public sectors. Women who express their opinions online very often receive violent threats and are coerced into keeping a low profile, and articles and reports voice concern that women are retreating from the political sector altogether. Moreover, there seems to be a relation between crises, gender-based violence and the consumption of online pornography. The UK Government Equalities Office has released research on the relation between pornography use and harmful sexual attitudes and behaviors. The reports conclude that pornography is one of the factors that “contribute to a permissive and conducive context that allows harmful sexual attitudes and behaviours to exist against women and girls.”

If better definitions are being developed and debates are under way, why does the issue continue to be a growing concern? These concerns and trends show that stronger initiatives, sanctions and focused debates are needed to tackle it. The following section briefly highlights projects that have been launched to tackle online violence.

What are some initiatives?

In 2020, UN Women launched a project called “Fireflies Campaign against Gender-Based Cyber Violence.” The campaign addressed online gender-based violence during the coronavirus pandemic and aimed to use social media to draw attention to the issue and engage the public in the discourse. One of the key findings was that more women (81%) than men (70%) reported online harassment cases.

One major step is the UK’s legal reform on online violence. A UK Government press release of 23 June 2023 states: “Abusers who share intimate images without consent to face up to 6 months in prison.” Deepfakes were also criminalized for the first time, which will have to be considered in future debate: “For the first time, sharing of ‘deep fake’ intimate images – explicit images or videos which have been digitally manipulated to look like someone else – will also be criminalized.” The reform aims to facilitate the prosecution of individuals who publish intimate images without consent. The question now is whether other states will follow suit and introduce stricter laws against cyber violence. Germany, for instance, does not yet have a specific law against cyber violence; such offences fall under the general laws on insult or threats.

In countries such as Rwanda and Tanzania, women increasingly use, and have to use, the internet for work. This has also increased violence against women in the cyber domain and underlines the need for better laws and safer online spaces. An initiative called Women@web helps “journalists, politicians, and human rights activists, among others, who have been confronted with various forms of gender-based online violence.” It, too, reports an increase in online violence since the coronavirus pandemic. Furthermore, studies conducted by Women@web have found that women often censor their own comments online to avoid “cyberbullying”. To tackle this, Women@web offers modules on: “digital rights, digital citizenship, digital platforms, digital security, digital storytelling and digital resilience. Focusing on these topics, regular training sessions are held for women in the four countries. The aim is to increase the overall digital literacy among women and empower them to remain in online spaces.”

Conclusion

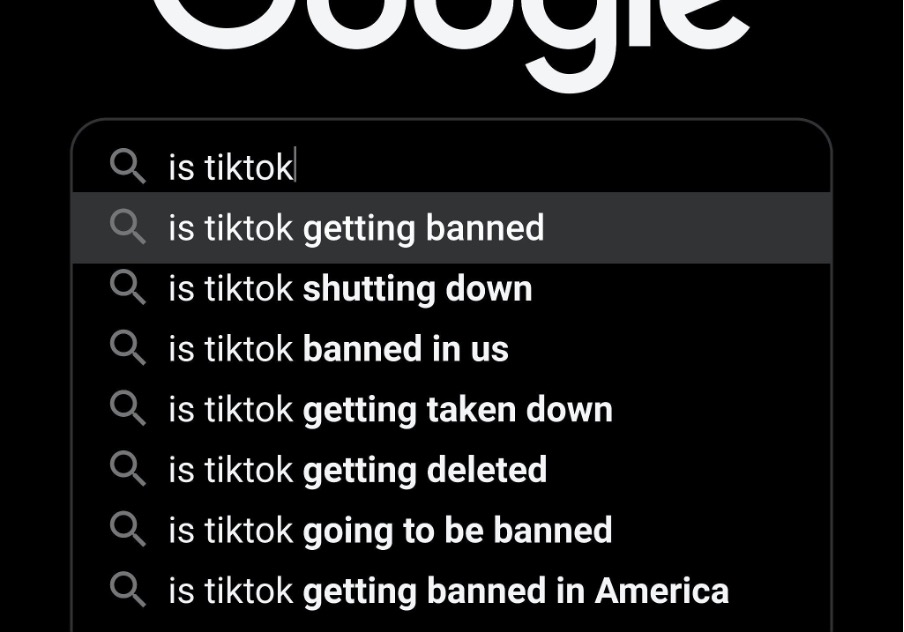

These initiatives already draw a lot of attention to the issue; however, many questions remain, such as whether the UK reform will lead other states to follow suit. Social media platforms such as Instagram and TikTok have also started to tighten their policies on what people may post in comments. Search engines track whether someone posts explicit language or sends explicit images. These measures are all steps in the right direction; however, the question remains whether a new crisis, such as the coronavirus pandemic, will contribute to another surge of online violence. Online violence against women is not a new topic but a steadily emerging issue. As technology advances, women on the one hand have more access to online helplines and initiatives but on the other hand face new threats such as AI-generated ‘deepfakes’. This calls for stronger sanctions and perhaps more focused campaigns aimed at a young audience to educate them about the issue and its repercussions.